AWS Amplify is an amazing open-source project from AWS that helps you build secure, scalable mobile and web applications.

In this post, I will show you how to create custom resolvers programmatically in AWS Amplify, keeping everything as code without relying on the AWS Console. In the past, you needed to either use the AWS AppSync console or edit AWS CloudFormation templates to implement this logic.

Why Custom Resolvers?

If you’re not familiar with AWS AppSync or GraphQL, a “resolver” is essentially a function that’s responsible for fetching data from a location to fulfil a request. For instance, a resolver might query from a database to get a stored record, or it could just compute a value directly. Resolvers are attached to fields on a “type” in a GraphQL schema. These are executed at runtime, depending on the request that comes from a client. When you’re developing GraphQL APIs, you often have to customize the resolvers to perform custom logic and implement things like data manipulation, authorisation, or fine-grained access control. Reference: https://aws.amazon.com/blogs/mobile/amplify-adds-support-for-multiple-environments-custom-resolvers-larger-data-models-and-iam-roles-including-mfa/

In our example, we want to fetch the data from an Amazon RDS PostgreSQL database instead of a DynamoDB table.

Before you start!

You must have your Amplify project initialised (amplify init) and a GraphQL API created (amplify add api). I won't cover the initialisation here; if you are reading this, you have probably done it already. Now we can start modifying the default code created by Amplify.

Starting to code

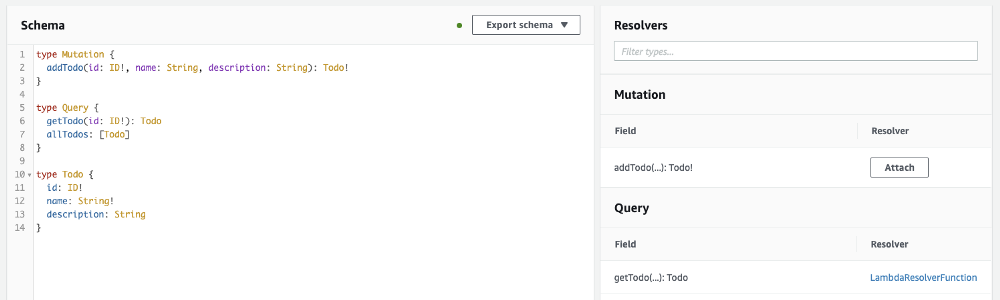

This is our example schema:

schema.graphql example

Next, we create the Lambda function that will act as our custom resolver:

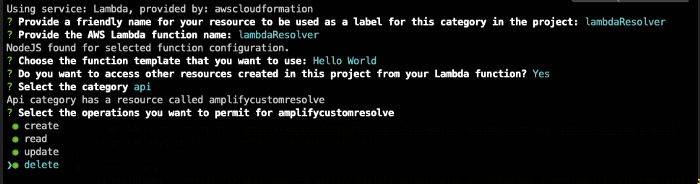

Run the command: amplify add function

We will call it lambdaResolver, as shown in the command output.

Now we can start editing our Lambda Resolver:

index.js for lambdaResolver

This custom resolver is responsible for fetching the data that you want, and it can get that data from any source. It must return exactly the object shape that your schema expects. For example, to mock some data you can do the following:

index.js for lambdaResolver mocking data
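As a minimal sketch of such a mock, assuming a hypothetical schema whose query returns a list of items with `id` and `title` fields (these names are illustrative and not taken from the schema above), the handler could simply return hard-coded data:

```javascript
// index.js for lambdaResolver (mock sketch).
// The `id` and `title` fields are hypothetical; use the fields your schema declares.
const MOCK_ITEMS = [
  { id: '1', title: 'Hello Amplify' },
  { id: '2', title: 'Custom resolvers as code' },
];

// AppSync passes the payload built by the request mapping template as `event`.
// The mock ignores it and always returns the same list.
const handler = async (event) => {
  return MOCK_ITEMS;
};

exports.handler = handler;
```

Whatever the handler returns is what the response template receives, so the keys must match the field names of the GraphQL type.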

Make sure that your Lambda function has the right permissions, runs in the correct VPC, and has the necessary execution role. In our case, we are fetching the data from an Amazon RDS PostgreSQL database located in a secure subnet, so the Lambda resolver must be able to reach the RDS instance.
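Once the network path is in place, the handler itself can stay small. The sketch below is one possible shape, not the exact code from this project: it assumes a hypothetical `posts` table, an `id` argument, and a database client that exposes the node-postgres style `query(text, values)` method, which resolves to an object with a `rows` array.

```javascript
// Builds a resolver handler around an injected database client.
// Assumption: `db.query(text, values)` resolves to `{ rows: [...] }`,
// as the `pg` package's Pool and Client do.
const makeHandler = (db) => async (event) => {
  // `event.arguments` is assumed to be forwarded by the request template.
  const { id } = event.arguments || {};

  // Parameterised query: the id is passed as a value, never concatenated into SQL.
  // The `posts` table and its columns are hypothetical.
  const result = await db.query(
    'SELECT id, title FROM posts WHERE id = $1',
    [id]
  );

  // Return null when nothing matches so a nullable GraphQL field resolves cleanly.
  return result.rows[0] || null;
};

// In the deployed function (assumption: the `pg` package is bundled with it):
// const { Pool } = require('pg');
// exports.handler = makeHandler(new Pool());
```

Injecting the client keeps the handler testable without a database. Creating the pool outside the handler lets warm Lambda invocations reuse the connection.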

VTL Templates

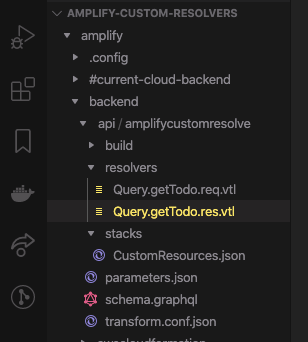

For each method (that is, each GraphQL field you resolve), you should create two VTL templates inside the resolvers folder: one for the request and one for the response.
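The request template decides what the Lambda receives as its event. Assuming the template sends a payload with a `field` name and an `arguments` object (an assumption; the shape is whatever your own template defines), a single Lambda can serve several methods by routing on the field name. The field names below are hypothetical:

```javascript
// Hypothetical per-field resolvers, keyed by the GraphQL field name.
const resolvers = new Map([
  ['getPost', async (args) => ({ id: args.id, title: 'Mock title' })],
  ['listPosts', async () => [{ id: '1', title: 'Mock title' }]],
]);

// Assumes the request template builds a payload like:
//   { "field": "getPost", "arguments": { "id": "1" } }
const handler = async (event) => {
  const resolve = resolvers.get(event.field);
  if (!resolve) {
    // Fail loudly so a missing mapping is visible in the GraphQL response.
    throw new Error(`No resolver registered for field "${event.field}"`);
  }
  return resolve(event.arguments || {});
};

exports.handler = handler;
```

With this layout, adding a new method means adding one pair of VTL templates and one entry in the map.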

Custom Resources (DataSource and Resolver)

We need to create custom resources for our Lambda resolver: a DataSource, a Resolver and a Role.

The DataSource points to our Lambda resolver. The Resolver maps our VTL templates to the method. The Role permits the DataSource to invoke the Lambda function.

CustomResources.json responsible for creating the DataSource, Resolver and Lambda Role

These resources should be included inside the CustomResources.json file inside the stacks folder.
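To make the relationship between the three resources concrete, here is a rough sketch that builds the `Resources` section as a plain object you could print with `JSON.stringify` and paste into CustomResources.json. It rests on several assumptions: the stack receives `AppSyncApiId`, `env`, `S3DeploymentBucket` and `S3DeploymentRootKey` parameters, the function is deployed as `lambdaResolver-<env>`, and the templates are named `<Type>.<field>.req.vtl` and `<Type>.<field>.res.vtl`. The logical IDs and the `Query`/`getPost` names are hypothetical.

```javascript
// S3 location of a VTL template uploaded from the resolvers folder (assumed layout).
const s3Template = (file) => ({
  'Fn::Sub': [
    's3://${S3DeploymentBucket}/${S3DeploymentRootKey}/resolvers/' + file,
    {
      S3DeploymentBucket: { Ref: 'S3DeploymentBucket' },
      S3DeploymentRootKey: { Ref: 'S3DeploymentRootKey' },
    },
  ],
});

const buildResources = (typeName, fieldName) => {
  // Assumed function name pattern: lambdaResolver-<env>.
  const lambdaArn = {
    'Fn::Sub': [
      'arn:aws:lambda:${AWS::Region}:${AWS::AccountId}:function:lambdaResolver-${env}',
      { env: { Ref: 'env' } },
    ],
  };
  return {
    // Role that AppSync assumes in order to invoke the Lambda function.
    LambdaResolverRole: {
      Type: 'AWS::IAM::Role',
      Properties: {
        AssumeRolePolicyDocument: {
          Version: '2012-10-17',
          Statement: [{
            Effect: 'Allow',
            Principal: { Service: 'appsync.amazonaws.com' },
            Action: 'sts:AssumeRole',
          }],
        },
        Policies: [{
          PolicyName: 'InvokeLambdaResolver',
          PolicyDocument: {
            Version: '2012-10-17',
            Statement: [{ Effect: 'Allow', Action: 'lambda:InvokeFunction', Resource: lambdaArn }],
          },
        }],
      },
    },
    // DataSource that points AppSync at the Lambda function.
    LambdaResolverDataSource: {
      Type: 'AWS::AppSync::DataSource',
      Properties: {
        ApiId: { Ref: 'AppSyncApiId' },
        Name: 'LambdaResolverDataSource',
        Type: 'AWS_LAMBDA',
        ServiceRoleArn: { 'Fn::GetAtt': ['LambdaResolverRole', 'Arn'] },
        LambdaConfig: { LambdaFunctionArn: lambdaArn },
      },
    },
    // Resolver that ties the field to the DataSource and its VTL templates.
    [`${typeName}${fieldName}Resolver`]: {
      Type: 'AWS::AppSync::Resolver',
      Properties: {
        ApiId: { Ref: 'AppSyncApiId' },
        DataSourceName: { 'Fn::GetAtt': ['LambdaResolverDataSource', 'Name'] },
        TypeName: typeName,
        FieldName: fieldName,
        RequestMappingTemplateS3Location: s3Template(`${typeName}.${fieldName}.req.vtl`),
        ResponseMappingTemplateS3Location: s3Template(`${typeName}.${fieldName}.res.vtl`),
      },
    },
  };
};

// Example: print the resources for a hypothetical Query.getPost field.
// console.log(JSON.stringify(buildResources('Query', 'getPost'), null, 2));
```

Referencing the DataSource name through `Fn::GetAtt` also makes CloudFormation create the DataSource before the Resolver.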

After all the changes, we should push/sync our project. You can push your changes to your code repository if you are using the Amplify pipelines, or run the CLI command amplify push locally.

This creates all the resources that we included in the CustomResources.json file and links them to each other. We can open the AppSync console (amplify console api) and see that everything we added is there.

Conclusion

You can programmatically create all your custom resolvers without relying on the AWS Console. You can also connect to any data source you want, which makes this a powerful tool, and you can even combine several data sources using Pipeline Resolvers.

At DNX Solutions, we work to bring a better cloud and application experience for digital-native companies in Australia. Our current focus areas are AWS, Well-Architected Solutions, Containers, ECS, Kubernetes, Continuous Integration/Continuous Delivery and Service Mesh. We are always hiring cloud engineers for our Sydney office, focusing on cloud-native concepts. Check our open-source projects at https://github.com/DNXLabs and follow us on Twitter, LinkedIn or Facebook.
