Every day, millions of words are spoken across live broadcasts, events, classrooms and streaming platforms. Those words are captured in captions, used once, and then discarded.

Until now.

AI-Media and DNX set out to prove that caption data could do more — transforming real-time speech into actionable, monetisable signals. The result is a validated approach to contextual AI that operates at broadcast speed and production scale.

About AI-Media

AI-Media has spent more than two decades making the world’s content accessible. Founded in Sydney in 2003, the company is now a global leader in captioning and language technology, serving broadcasters, enterprises and governments worldwide. Today, its AI-powered LEXI platform delivers more than nine million minutes of captioning, transcription and translation every month, operating at the scale and speed of live content.

That scale is exactly what made the opportunity interesting. When you process that much content, even a small shift in what captions can do creates significant commercial potential. The question was whether the technology could actually deliver it.

The Opportunity: Contextual AI for Live Captioning

AI-Media’s platform processes vast volumes of live and recorded content every day. Within that stream lies valuable, time-sensitive information: references to products, brands, concepts and events that audiences are actively engaging with in real time.

The question was whether generative AI could detect those signals instantly and respond with relevant, contextual information, without disrupting live workflows. Because AI-Media already operates within live caption workflows, this intelligence layer can be applied natively, without introducing additional latency or complexity to broadcast operations.

In a live environment, the flow looks like this:

- A presenter mentions a product or concept on screen

- The caption stream captures that reference in real time

- An AI layer detects intent in milliseconds

- The system matches it against a structured knowledge base

- Contextual information is surfaced in sync with the moment it was spoken

This transforms captions from a compliance requirement into a dynamic intelligence layer.

While initially driven by a home shopping use case, the potential extends across industries:

- Retail and home shopping: surface product details or targeted promotions at the moment of mention

- Education and training: provide definitions, references or supplementary material in real time

- Broadcast and media: enrich live content with contextual depth across news, sport and entertainment

For AI-Media, the strategic significance was clear. As Declan Gallagher, VP APAC at AI-Media, explains: “Captions have traditionally been treated as a cost. This initiative shows how they can become a real-time, monetisable asset.”

Validating whether that was genuinely achievable, technically and commercially, was the work DNX was brought in to do.

The Approach

DNX and AI-Media structured the engagement as a formal, multi-phase technical partnership. DNX led the generative AI assessment, architectural design and model evaluation. AI-Media contributed domain expertise, real-world broadcast workflows and the operational context needed to ground the concepts in production reality. The collaboration was iterative by design, with both teams jointly shaping how the architecture could be responsibly integrated into an enterprise media platform.

Phase 1: Discovery and planning

DNX and AI-Media’s technical team worked together from the outset. They mapped the existing captioning infrastructure, identified where a contextual AI layer could integrate cleanly, and established measurable success criteria before any development began.

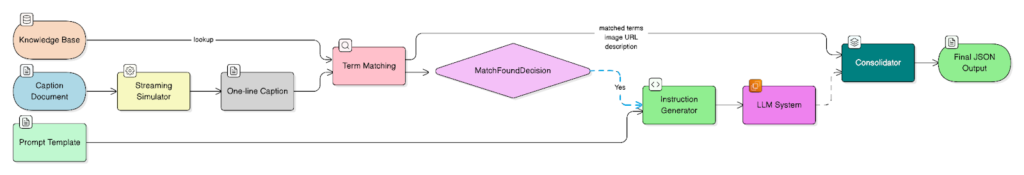

Phase 2: Prototype development and testing

Using Amazon Bedrock, AWS’s fully managed service for building generative AI applications with foundation models, DNX built a working prototype around a four-layer architecture:

- Input: caption text segments fed through a streaming simulator, replicating AI-Media’s live caption flow without connecting to their production system

- Processing: natural language processing identifies product and brand references within each caption line and matches them against a curated knowledge base

- Enrichment: when a match is found, Amazon Nova Pro (via Amazon Bedrock) draws on the knowledge base to enrich the caption line with relevant contextual information

- Output: contextually enriched responses delivered as a structured JSON output
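The four layers above can be sketched in a few lines of Python. This is an illustrative outline under stated assumptions, not DNX's implementation: the knowledge base entries, the function names and the simple phrase matching are all hypothetical, and `stub_enrich` stands in for the call to a foundation model.

```python
import json
import re
from dataclasses import dataclass
from typing import Iterable, Iterator, Optional

# Hypothetical knowledge base: product name -> facts used to ground the enrichment.
KNOWLEDGE_BASE = {
    "aurora blender": {"sku": "AB-100", "summary": "1200 W blender with six speed settings"},
    "trailmax boots": {"sku": "TB-220", "summary": "Waterproof hiking boots"},
}


@dataclass
class CaptionLine:
    start_seconds: float
    text: str


def simulate_stream(lines: Iterable[tuple]) -> Iterator[CaptionLine]:
    """Input layer: replay extracted sample captions one line at a time."""
    for start, text in lines:
        yield CaptionLine(start, text)


def find_reference(text: str) -> Optional[str]:
    """Processing layer: naive case-insensitive phrase match (a stand-in for real NLP)."""
    lowered = text.lower()
    for name in KNOWLEDGE_BASE:
        if re.search(r"\b" + re.escape(name) + r"\b", lowered):
            return name
    return None


def stub_enrich(line: CaptionLine, name: str, facts: dict) -> str:
    """Enrichment layer stand-in; a real system would call a foundation model here."""
    return f"{name.title()}: {facts['summary']}"


def run_pipeline(lines, enrich=stub_enrich) -> list:
    """Output layer: one JSON document per caption line that matched the knowledge base."""
    results = []
    for line in simulate_stream(lines):
        name = find_reference(line.text)
        if name is None:
            continue  # no product or brand reference in this line
        facts = KNOWLEDGE_BASE[name]
        results.append(json.dumps({
            "start_seconds": line.start_seconds,
            "caption": line.text,
            "match": name,
            "sku": facts["sku"],
            "enrichment": enrich(line, name, facts),
        }))
    return results


# Hypothetical sample data: (start time in seconds, caption text).
SAMPLE = [
    (0.0, "Welcome back to the show."),
    (4.2, "Next up is the Aurora Blender, our best seller."),
]

if __name__ == "__main__":
    for doc in run_pipeline(SAMPLE):
        print(doc)
```

Keeping the caption's start time in the output is what would let a downstream player surface the enrichment in sync with the moment the product was mentioned. Passing `enrich` as a parameter keeps the model call replaceable during testing.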

The team selected Amazon Nova Pro, one of Amazon’s own foundation models available through Amazon Bedrock, for its strong balance of accuracy, speed and cost efficiency. With support for over 200 languages and a 300,000-token context window, Nova Pro was well suited to processing multilingual caption content at scale. The architecture leverages Amazon Bedrock’s unified API, meaning the model can be swapped for any of the 100+ foundation models available on the platform — including options from Anthropic, Meta and others — without redesigning the system. This gives AI-Media the flexibility to optimise for specific languages, latency profiles or cost targets as the solution evolves.
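To illustrate why the model can be swapped without redesign, here is a minimal sketch of a request built for the Amazon Bedrock Converse operation, where the model is just a string parameter. The prompt wording, inference settings and helper names are assumptions for illustration, and the Nova Pro model identifier shown should be confirmed for your account and region.

```python
from typing import Any, Dict

# Assumed identifier for Amazon Nova Pro; verify it in the Bedrock console before use.
NOVA_PRO_MODEL_ID = "amazon.nova-pro-v1:0"


def build_converse_request(model_id: str, caption: str, facts: str) -> Dict[str, Any]:
    """Build keyword arguments for the Bedrock Runtime Converse operation."""
    return {
        "modelId": model_id,
        "system": [{"text": "Answer using only the supplied product facts, in one sentence."}],
        "messages": [
            {"role": "user", "content": [{"text": f"Caption: {caption}\nFacts: {facts}"}]}
        ],
        # Hypothetical settings; tune for latency and output length.
        "inferenceConfig": {"maxTokens": 120, "temperature": 0.2},
    }


def enrich(model_id: str, caption: str, facts: str) -> str:
    """Send the request to Bedrock. Requires boto3, AWS credentials and model access."""
    import boto3  # imported lazily so the request builder works without AWS set up

    client = boto3.client("bedrock-runtime")
    response = client.converse(**build_converse_request(model_id, caption, facts))
    return response["output"]["message"]["content"][0]["text"]
```

Because the request shape is the same for every model that supports Converse, trialling a different model for a given language or latency target would mean passing a different `model_id` rather than rewriting the enrichment layer.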

The entire engagement ran on extracted sample data through a purpose-built streaming simulator. AI-Media’s live captioning services were never connected to the prototype, so there was no risk to the services their customers and viewers depend on.

Phase 3: Review and decision framework

DNX delivered a complete package for AI-Media’s leadership:

- A risk framework for potential deployment

- A full performance analysis with detailed accuracy metrics

- A technical feasibility assessment for production-scale integration

- A business case with projected ROI and cost modelling

The Outcomes

The engagement moved AI-Media from concept to a validated, decision-ready capability.

Four key outcomes were demonstrated:

- It works at broadcast speed: contextual AI enrichment is technically feasible within live captioning environments

- It keeps pace with live content: the system processes fast-moving speech without disrupting the viewer experience

- It is accurate enough to monetise: the solution identifies specific products and concepts with sufficient precision to support commercial use cases

- It is operationally viable: the workflow integrates cleanly into existing broadcast architectures

Key Performance Metrics

- Voice-to-transcript latency: < 2 seconds

- Transcript-to-AI enrichment latency: < 600 milliseconds

- Estimated enrichment cost: ~ $0.001 per caption line

At this cost profile, contextual AI becomes economically viable at scale for organisations already investing in captioning.
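A quick back-of-envelope calculation shows how these figures combine. The latency and cost constants come from the metrics above; the caption rate of 20 lines per minute is a hypothetical input chosen only for illustration, and the sketch assumes every caption line is enriched.

```python
# Figures taken from the reported metrics (upper bounds and an approximate unit cost).
VOICE_TO_TRANSCRIPT_S = 2.0
TRANSCRIPT_TO_ENRICHMENT_S = 0.6
COST_PER_LINE_USD = 0.001


def worst_case_delay_seconds() -> float:
    """Upper bound on the delay from spoken word to enriched output."""
    return VOICE_TO_TRANSCRIPT_S + TRANSCRIPT_TO_ENRICHMENT_S


def hourly_cost_usd(lines_per_minute: float) -> float:
    """Estimated enrichment cost for one hour of content at a given caption rate."""
    return lines_per_minute * 60 * COST_PER_LINE_USD


if __name__ == "__main__":
    # 20 lines per minute is an assumed rate, not a figure from the engagement.
    print(f"Worst-case delay: {worst_case_delay_seconds():.1f} s")
    print(f"Cost per broadcast hour: ${hourly_cost_usd(20):.2f}")
```

Under that assumed rate, enrichment adds roughly a dollar per broadcast hour, and the combined delay stays under about 2.6 seconds.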

The team also validated that standard models available within Amazon Bedrock were sufficient to achieve the desired outcomes, without needing to reach for more expensive or complex alternatives. That finding matters commercially: it means the solution can operate within realistic budget boundaries for broadcasters, distributors and content platforms, with the option to enhance performance further through model selection as the use case matures.

The LLM used in the evaluation is multilingual, with coverage across major languages including Spanish, Portuguese, French and Korean, making the architecture well suited to global deployment from the outset.

Adrian Britton, Head of Tech-Sales at AI-Media, puts it best: “This is the first meaningful step from a promising idea to something real and deployable.”

Why It Matters

Ideas for AI are easy to come by. What’s hard is knowing whether they’ll actually work, what they’ll cost at scale, and whether the organisation is ready to build on them. That’s precisely what this engagement answered for AI-Media.

AI-Media now has:

- A validated architecture for real-time contextual AI

- Proven performance within live broadcast constraints

- A clear commercial and operational pathway to deployment

The result wasn’t just a working prototype. It was a validated foundation: proof that the technology performs within the time and cost constraints that matter for a live broadcasting environment, and a clear map of what production deployment would actually involve.

The collaboration also demonstrates the value of the AWS ecosystem. By leveraging Amazon Bedrock and working alongside DNX, AI-Media was able to move rapidly from concept to a production-ready foundation without compromising reliability or scalability.

What’s Next

With feasibility proven, the next step is deploying the solution in a live customer environment. The initial use case originated in the Korean home shopping market, one of the most commercially dynamic broadcast ecosystems in the world, and discussions are already underway to pilot the technology in production. Over time, this capability has the potential to redefine how live content is experienced, enriched and monetised.

As AI-Media continues to expand its LEXI platform, contextual AI represents a natural evolution: transforming accessibility infrastructure into a real-time intelligence and monetisation layer.

Want to explore what AI could do for your business?

DNX helps organisations move from idea to validated capability, fast and without the guesswork.